Physical Science Reading and Study Workbook Chapter 23

A paper's "Methods" (or "Materials and Methods") section provides information on the study's design and participants. Ideally, it should be so clear and detailed that other researchers can repeat the study without needing to contact the authors. You will need to examine this section to determine the study's strengths and limitations, which both affect how the study's results should be interpreted.

Demographics

The "Methods" section usually starts by providing information on the participants, such as age, sex, lifestyle, health status, and method of recruitment. This information will help you decide how relevant the study is to you, your loved ones, or your clients.

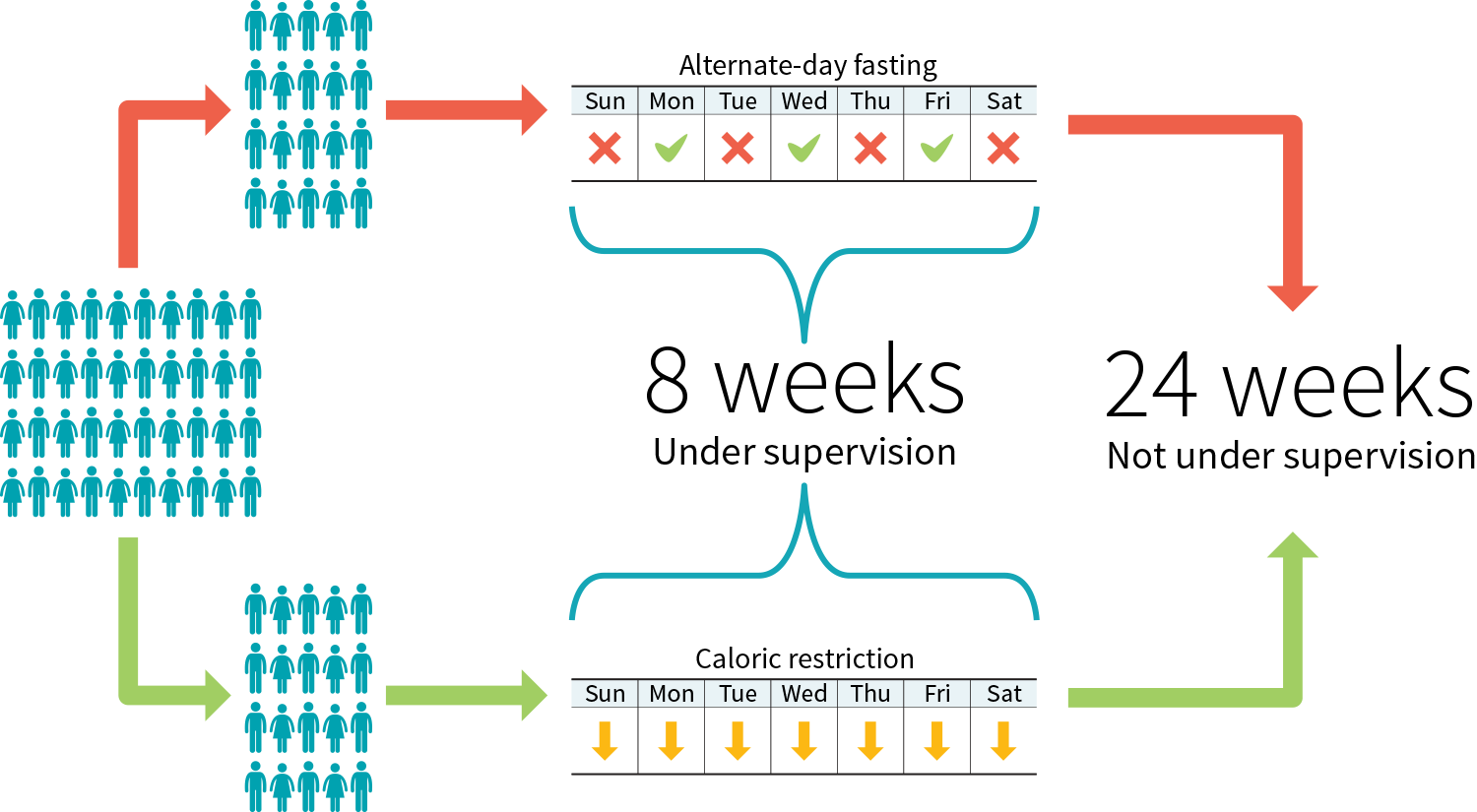

Figure 3: Example study protocol to compare two diets

The demographic information can be lengthy, and you might be tempted to skip it, yet it affects both the reliability of the study and its applicability.

Reliability. All else being equal, the larger the sample size of a study (i.e., the more participants it has), the more reliable its results. Note that a study often starts with more participants than it ends with; diet studies, notably, commonly see a fair number of dropouts.

Applicability. In health and fitness, applicability means that a compound or intervention (i.e., exercise, diet, supplement) that is useful for one person may be a waste of money (or worse, a danger) for another. For example, while creatine is widely recognized as safe and effective, there are "nonresponders" for whom this supplement fails to improve exercise performance.

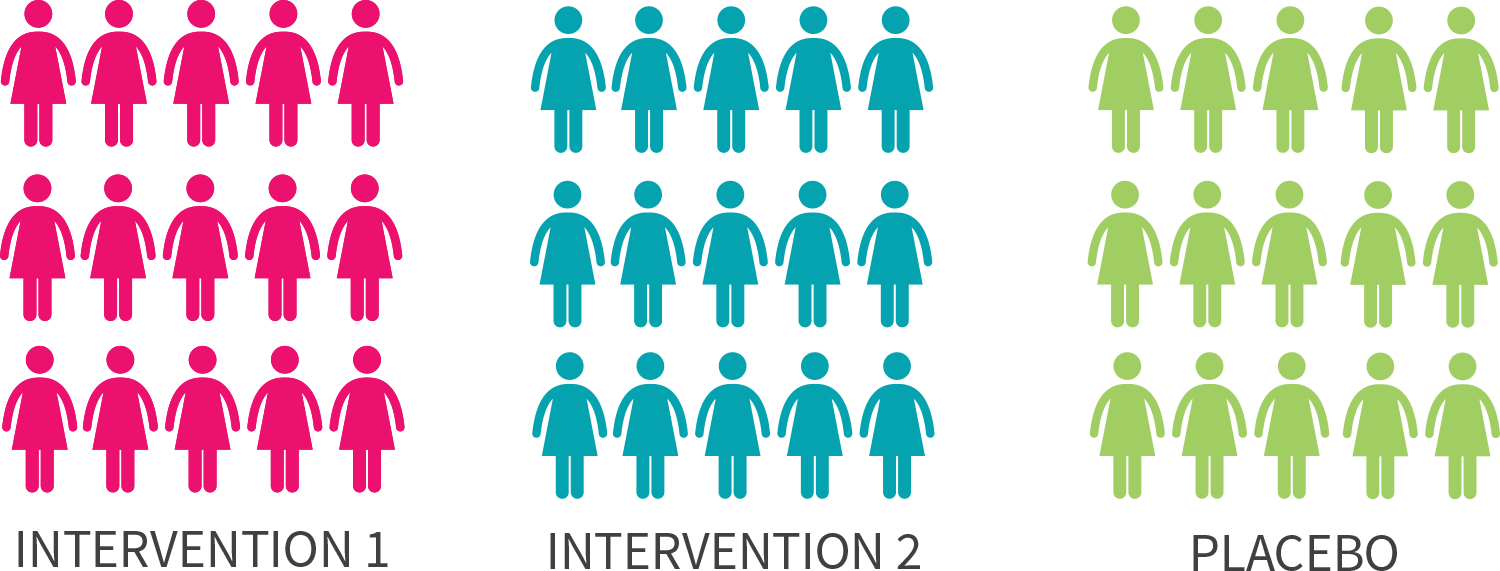

Your mileage may vary, as the creatine example shows, yet a study's demographic information can help you assess this study's applicability. If a trial only recruited men, for example, women reading the study should keep in mind that its results may be less applicable to them. Likewise, an intervention tested in college students may yield different results when performed on people from a retirement facility.

Figure 4: Some trials are sex-specific

Furthermore, different recruiting methods will attract different demographics, and so can influence the applicability of a trial. In most scenarios, trialists will use some form of "convenience sampling". For example, studies run by universities will often recruit among their students. However, some trialists will use "random sampling" to make their trial's results more applicable to the general population. Such trials are generally called "augmented randomized controlled trials".

Confounders

Finally, the demographic information will usually mention if people were excluded from the study, and if so, for what reason. Most often, the reason is the existence of a confounder: a variable that would confound (i.e., influence) the results.

For example, if you study the effect of a resistance training program on muscle mass, you don't want some of the participants to take muscle-building supplements while others don't. Either you'll want all of them to take the same supplements or, more likely, you'll want none of them to take any.

Likewise, if you study the effect of a muscle-building supplement on muscle mass, you don't want some of the participants to exercise while others do not. You'll either want all of them to follow the same workout plan or, less likely, you'll want none of them to exercise.

It is of course possible for studies to have more than two groups. You could have, for example, a study on the effect of a resistance training program with the following four groups:

- Resistance training program + no supplement
- Resistance training program + creatine
- No resistance training + no supplement
- No resistance training + creatine

But if your study has four groups instead of two, for each group to keep the same sample size you need twice as many participants, which makes your study more difficult and expensive to run.

When you come right down to it, any differences between the participants are variables and thus potential confounders. That's why trials in mice use specimens that are genetically very close to one another. That's also why trials in humans seldom attempt to test an intervention on a diverse sample of people. A trial restricted to older women, for instance, has in effect eliminated age and sex as confounders.

As we saw above, with a great enough sample size, we can have more groups. We can even create more groups after the study has run its course, by performing a subgroup analysis. For example, if you run an observational study on the effect of red meat on thousands of people, you can later separate the data for "male" from the data for "female" and run a separate analysis on each subset of data. However, subgroup analyses of these sorts are considered exploratory rather than confirmatory and could potentially lead to false positives. (When, for instance, a blood test erroneously detects a disease, it is called a false positive.)

Design and endpoints

The "Methods" section will also describe how the study was run. Design variants include single-blind trials, in which only the participants don't know if they're receiving a placebo; observational studies, in which researchers only observe a demographic and take measurements; and many more. (See figure 2 above for more examples.)

More specifically, this is where you will learn about the length of the study, the dosages used, the workout regimen, the testing methods, and so on. Ideally, as we said, this information should be so clear and detailed that other researchers can repeat the study without needing to contact the authors.

Finally, the "Methods" section can also make clear the endpoints the researchers will be looking at. For instance, a study on the effects of a resistance training program could use muscle mass as its primary endpoint (its main criterion to judge the outcome of the study) and fat mass, strength performance, and testosterone levels as secondary endpoints.

One trick of studies that want to find an effect (sometimes so that they can serve as marketing material for a product, but often simply because studies that show an effect are more likely to get published) is to collect many endpoints, then to make the paper about the endpoints that showed an effect, either by downplaying the other endpoints or by not mentioning them at all. To prevent such "data dredging/fishing" (a method whose devious efficacy was demonstrated through the hilarious chocolate hoax), many scientists push for the preregistration of studies.
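To see why collecting many endpoints makes a "hit" likely even when nothing works, here is a minimal sketch. It assumes the endpoints are statistically independent and each tested at the same threshold, which real endpoints rarely satisfy exactly; the function name is hypothetical.

```python
def chance_of_any_false_positive(num_endpoints: int, alpha: float = 0.05) -> float:
    """Probability that at least one of several independent tests comes out
    'significant' when the intervention truly has no effect on any endpoint."""
    return 1 - (1 - alpha) ** num_endpoints


if __name__ == "__main__":
    for k in (1, 5, 10, 20):
        rate = chance_of_any_false_positive(k)
        print(f"{k:>2} endpoints -> {rate:.0%} chance of at least one false positive")
```

Under these assumptions, a paper that measures 20 endpoints and reports only the one that "worked" is more likely than not to be reporting noise.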

Sniffing out the tricks used by the less scrupulous authors is, alas, part of the skills you'll need to develop to assess published studies.

Interpreting the statistics

The "Methods" section usually concludes with a hearty statistics discussion. Determining whether an appropriate statistical analysis was used for a given trial is an entire field of study, so we suggest you don't sweat the details; try to focus on the big picture.

First, let's clear up two common misunderstandings. You may have read that an effect was significant, only to later discover that it was very small. Similarly, you may have read that no effect was found, yet when you read the paper you found that the intervention group had lost more weight than the placebo group. What gives?

The problem is simple: those quirky scientists don't speak like normal people do.

For scientists, significant doesn't mean important; it means statistically significant. An effect is significant if the data collected over the course of the trial would be unlikely if there really was no effect.

Therefore, an effect can be significant yet very small: 0.2 kg (0.5 lb) of weight loss over a year, for instance. More to the point, an effect can be significant yet not clinically relevant (meaning that it has no discernible effect on your health).

Relatedly, for scientists, no effect usually means no statistically significant effect. That's why you may review the measurements collected over the course of a trial and notice an increase or a decrease yet read in the conclusion that no changes (or no effects) were found. There were changes, but they weren't significant. In other words, there were changes, but so small that they may be due to random fluctuations (they may also be due to an actual effect; we can't know for sure).

We saw earlier, in the "Demographics" section, that the larger the sample size of a study, the more reliable its results. Relatedly, the larger the sample size of a study, the greater its ability to detect whether small effects are significant. A small change is less likely to be due to random fluctuations when found in a study with a thousand people, let's say, than in a study with ten people.

This explains why a meta-analysis may find significant changes by pooling the data of several studies which, independently, found no significant changes.
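The pooling effect can be sketched with a simple fixed-effect (inverse-variance) meta-analysis. The three studies below are made-up numbers chosen purely for illustration, and the normal approximation is an assumption; real meta-analyses often use random-effects models and other refinements.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p(estimate: float, std_error: float) -> float:
    """Two-sided p-value under a normal (z) approximation."""
    z = abs(estimate / std_error)
    return 2 * (1 - NormalDist().cdf(z))


def pool_fixed_effect(studies):
    """Inverse-variance pooling of (estimate, standard_error) pairs."""
    weights = [1 / se ** 2 for _, se in studies]
    pooled = sum(w * est for w, (est, _) in zip(weights, studies)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    return pooled, pooled_se


if __name__ == "__main__":
    # Hypothetical studies: (extra weight loss in kg, standard error)
    studies = [(1.0, 0.7), (1.2, 0.7), (0.8, 0.7)]
    for est, se in studies:
        print(f"single study: p = {two_sided_p(est, se):.3f}")
    pooled, pooled_se = pool_fixed_effect(studies)
    print(f"pooled:       p = {two_sided_p(pooled, pooled_se):.3f}")
```

With these illustrative numbers, none of the individual studies reaches p ≤ 0.05, but the pooled estimate does, because pooling shrinks the standard error.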

P-values 101

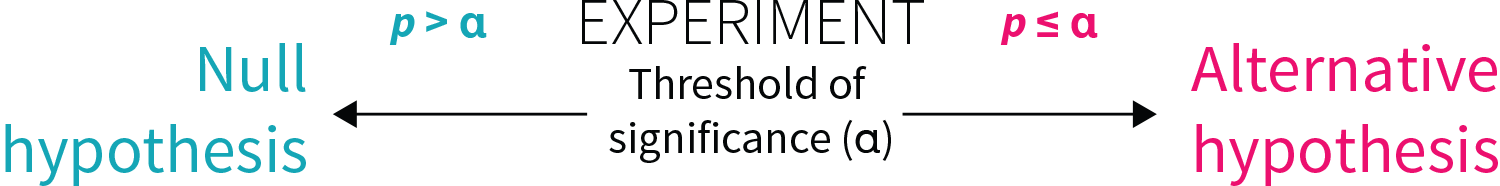

Most often, an effect is said to be significant if the statistical analysis (run by the researchers post-study) delivers a p-value that isn't higher than a certain threshold (set by the researchers pre-study). We'll call this threshold the threshold of significance.

Understanding how to interpret p-values correctly can be tricky, even for specialists, but here's an intuitive way to think about them:

Think about a coin toss. Flip a coin 100 times and you will get roughly a 50/50 split of heads and tails. Not terribly surprising. But what if you flip this coin 100 times and get heads every time? Now that's surprising! For the record, the probability of it actually happening is 0.00000000000000000000000000008%.
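The figure for 100 heads in a row can be checked in a couple of lines, assuming a fair coin and independent tosses (the function name is just for illustration):

```python
def probability_all_heads(num_tosses: int) -> float:
    """Probability that a fair coin lands heads on every one of num_tosses tosses."""
    return 0.5 ** num_tosses


if __name__ == "__main__":
    p = probability_all_heads(100)
    print(f"probability:   {p:.2e}")
    print(f"as a percent:  {p * 100:.2e} %")
```

The percentage comes out at roughly 8 × 10⁻²⁹, which is the long string of zeros quoted above.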

You can think of p-values in terms of getting all heads when flipping a coin.

- A p-value of 5% (p = 0.05) is about as surprising as getting all heads on four coin tosses.
- A p-value of 0.5% (p = 0.005) is about as surprising as getting all heads on eight coin tosses.
- A p-value of 0.05% (p = 0.0005) is about as surprising as getting all heads on 11 coin tosses.

Contrary to popular belief, the "p" in "p-value" does not stand for "probability". The probability of getting 4 heads in a row is 6.25%, not 5%. If you want to convert a p-value into coin tosses (technically called S-values) and a probability percentage, check out the converter here.
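If the converter isn't at hand, the conversion is easy to sketch yourself. The S-value is the negative base-2 logarithm of the p-value; rounding it to the nearest whole number gives the coin-toss count. The helper names here are hypothetical.

```python
from math import log2


def s_value(p_value: float) -> float:
    """Surprisal of a p-value in bits: the (fractional) number of all-heads coin tosses."""
    return -log2(p_value)


def all_heads_probability(num_tosses: int) -> float:
    """Probability of getting heads on every toss of a fair coin."""
    return 0.5 ** num_tosses


if __name__ == "__main__":
    for p in (0.05, 0.005, 0.0005):
        bits = s_value(p)
        tosses = round(bits)
        print(f"p = {p}: S-value = {bits:.2f} bits, about {tosses} tosses "
              f"(all-heads probability {all_heads_probability(tosses):.4%})")
```

The rounding is why the coin-toss probabilities only approximate the p-values: four tosses correspond to 6.25%, not exactly 5%.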

As we saw, an effect is significant if the data collected over the course of the trial would be unlikely if there really was no effect. Now we can add that the lower the p-value (under the threshold of significance), the more confident we can be that an effect is significant.

P-values 201

All right. Fair warning: we're going to get nerdy. Well, nerdier. Feel free to skip this section and resume reading here.

Still with us? All right, then: let's get at it. As we've seen, researchers run statistical analyses on the results of their study (usually one analysis per endpoint) in order to decide whether or not the intervention had an effect. They commonly make this decision based on the p-value of the results, which tells you how likely a result at least as extreme as the one observed would be if the null hypothesis, among other assumptions, were true.

Ah, jargon! Don't panic, we'll explain and illustrate those concepts.

In every experiment there are generally two opposing statements: the null hypothesis and the alternative hypothesis. Let's imagine a fictional study testing the weight-loss supplement "Better Weight" against a placebo. The two opposing statements would look like this:

- Null hypothesis: compared to placebo, Better Weight does not increase or decrease weight. (The hypothesis is that the supplement's effect on weight is null.)
- Alternative hypothesis: compared to placebo, Better Weight does decrease or increase weight. (The hypothesis is that the supplement has an effect, positive or negative, on weight.)

The purpose is to see whether the effect (here, on weight) of the intervention (here, a supplement called "Better Weight") is better, worse, or the same as the effect of the control (here, a placebo, but sometimes the control is another, well-studied intervention; for instance, a new drug can be studied against a reference drug).

For that purpose, the researchers usually set a threshold of significance (α) before the trial. If, at the end of the trial, the p-value (p) from the results is less than or equal to this threshold (p ≤ α), there is a significant difference between the effects of the two treatments studied. (Remember that, in this context, significant means statistically significant.)

Figure 5: Threshold for statistical significance

The most commonly used threshold of significance is 5% (α = 0.05). It means that if the null hypothesis (i.e., the idea that there was no difference between treatments) is true, then, after repeating the experiment an infinite number of times, the researchers would get a false positive (i.e., would find a significant effect where there is none) at most 5% of the time (p ≤ 0.05).
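We can't repeat an experiment an infinite number of times, but we can simulate a few thousand repetitions. The sketch below is a toy model, not any particular study's analysis: it assumes both groups are drawn from the same normal distribution with a known standard deviation of 1 (so the null hypothesis is true by construction) and uses a simple z-test. All names and defaults are illustrative.

```python
import random
from math import sqrt
from statistics import NormalDist


def simulate_false_positive_rate(num_trials: int = 2000, n_per_group: int = 30,
                                 alpha: float = 0.05, seed: int = 0) -> float:
    """Fraction of simulated no-effect trials that still reach p <= alpha."""
    rng = random.Random(seed)
    std_error = sqrt(2 / n_per_group)  # standard error of the difference, SD assumed to be 1
    false_positives = 0
    for _ in range(num_trials):
        # Both groups come from the same distribution: any difference is pure chance.
        treatment_mean = sum(rng.gauss(0, 1) for _ in range(n_per_group)) / n_per_group
        placebo_mean = sum(rng.gauss(0, 1) for _ in range(n_per_group)) / n_per_group
        z = (treatment_mean - placebo_mean) / std_error
        p = 2 * (1 - NormalDist().cdf(abs(z)))
        if p <= alpha:
            false_positives += 1
    return false_positives / num_trials


if __name__ == "__main__":
    print(f"false-positive rate: {simulate_false_positive_rate():.1%}")
```

The simulated rate should land close to 5%, with some wobble from one seed to the next because the number of repetitions is finite.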

Generally, the p-value is a measure of consistency between the results of the study and the idea that the two treatments have the same effect. Let's see how this would play out in our Better Weight weight-loss trial, where one of the treatments is a supplement and the other a placebo:

- Scenario 1: The p-value is 0.80 (p = 0.80). The results are more consistent with the null hypothesis (i.e., the idea that there is no difference between the two treatments). We conclude that Better Weight had no significant effect on weight loss compared to placebo.
- Scenario 2: The p-value is 0.01 (p = 0.01). The results are more consistent with the alternative hypothesis (i.e., the idea that there is a difference between the two treatments). We conclude that Better Weight had a significant effect on weight loss compared to placebo.

While p = 0.01 is a significant result, so is p = 0.000001. So what information do smaller p-values offer us? All other things being equal, they give us greater confidence in the findings. In our example, a p-value of 0.000001 would give us greater confidence that Better Weight had a significant effect on weight change. But sometimes things aren't equal between the experiments, making direct comparison between two experiments' p-values tricky and sometimes downright invalid.

Even if a p-value is significant, remember that a significant effect may not be clinically relevant. Let's say that we found a significant result of p = 0.01 showing that Better Weight improves weight loss. The catch: Better Weight produced only 0.2 kg (0.5 lb) more weight loss compared to placebo after one year, a difference too small to have any meaningful effect on health. In this case, though the effect is significant, statistically, the real-world effect is too small to justify taking this supplement. (This type of scenario is more likely to take place when the study is large since, as we saw, the larger the sample size of a study, the greater its ability to detect whether small effects are significant.)
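To illustrate how study size alone can turn the same 0.2 kg difference from "no effect" into "significant", here is a rough sketch. It assumes a two-group z-test and a standard deviation of 5 kg for weight change in both groups; that 5 kg figure is invented for the example, as are the group sizes.

```python
from math import sqrt
from statistics import NormalDist


def p_value_for_difference(diff_kg: float, sd_kg: float, n_per_group: int) -> float:
    """Two-sided p-value for an observed difference between two equal-sized groups."""
    std_error = sd_kg * sqrt(2 / n_per_group)
    z = abs(diff_kg) / std_error
    return 2 * (1 - NormalDist().cdf(z))


if __name__ == "__main__":
    # Same observed difference (0.2 kg), same assumed spread (5 kg), different study sizes.
    for n in (100, 1_000, 10_000):
        p = p_value_for_difference(0.2, 5.0, n)
        print(f"{n:>6} participants per group: p = {p:.3f}")
```

Under these assumptions the difference is nowhere near significant with 100 people per group, yet comfortably below 0.05 with 10,000 per group, even though 0.2 kg matters just as little to your health in both cases.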

Finally, we should mention that, though the most commonly used threshold of significance is 5% (p ≤ 0.05), some studies require greater certainty. For instance, for genetic epidemiologists to declare that a genetic association is statistically significant (say, to declare that a gene is associated with weight gain), the threshold of significance is usually set at 0.0000005% (p ≤ 0.000000005), which corresponds to getting all heads on 28 coin tosses. The probability of this happening is about 0.0000004%.

P-values: Don't worship them!

Finally, keep in mind that, while important, p-values aren't the final say on whether a study's conclusions are accurate.

We saw that researchers too eager to find an effect in their study may resort to "data fishing". They may also try to lower p-values in various ways: for instance, they may run different analyses on the same data and only report the significant p-values, or they may recruit more and more participants until they get a statistically significant result. These bad scientific practices are known as "p-hacking" or "selective reporting". (You can read about a real-life example of this here.)
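The "recruit until it's significant" trick can be illustrated with a small simulation. This is a toy model under stated assumptions: there is no real effect, outcomes are normal with a known standard deviation of 1, and the researcher checks a z-test after every 10 participants per group, stopping at the first p ≤ 0.05 or at 100 per group. Function names and defaults are hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist


def reaches_significance(rng: random.Random, peek: bool, max_n: int = 100,
                         step: int = 10, alpha: float = 0.05) -> bool:
    """Run one no-effect trial; return True if it is ever declared significant.

    With peek=True the data are tested after every batch of participants;
    with peek=False they are tested once, at the planned final sample size.
    """
    treatment_sum = placebo_sum = 0.0
    n = 0
    while n < max_n:
        for _ in range(step):
            treatment_sum += rng.gauss(0, 1)
            placebo_sum += rng.gauss(0, 1)
        n += step
        if peek or n == max_n:
            z = (treatment_sum / n - placebo_sum / n) / sqrt(2 / n)
            p = 2 * (1 - NormalDist().cdf(abs(z)))
            if p <= alpha:
                return True
    return False


def false_positive_rate(peek: bool, num_trials: int = 2000, seed: int = 1) -> float:
    """Share of simulated no-effect trials that end up 'significant'."""
    rng = random.Random(seed)
    hits = sum(reaches_significance(rng, peek) for _ in range(num_trials))
    return hits / num_trials


if __name__ == "__main__":
    print(f"single planned analysis:      {false_positive_rate(peek=False):.1%}")
    print(f"peeking after every 10 added: {false_positive_rate(peek=True):.1%}")
```

In this setup, testing once keeps the false-positive rate near the nominal 5%, while repeated peeking pushes it well above that, even though the supplement in the simulation does nothing at all.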

While a study's statistical analysis usually accounts for the variables the researchers were trying to control for, p-values can also be influenced (on purpose or not) by study design, hidden confounders, the types of statistical tests used, and much, much more. When evaluating the strength of a study's design, imagine yourself in the researcher's shoes and consider how you could torture a study to make it say what you want and advance your career in the process.


Source: https://examine.com/guides/how-to-read-a-study/
